At the very least, an AppleScript could/should be provided to handle this requirement. An alternative thought is that DT’s developers could perhaps strike up a strategic partnership with St. Clair Software, the developers of HistoryHound, so that DT can make use of HH’s index.

My wish is that this type of conversion tool could be part of the main DT program, for bulk converting existing bookmarks to Markdown formatted files. The DT developers already provide a bookmarklet for converting individual web pages to Markdown (via Brett Terpstra’s Markdownifier web service) and then automatically adding the converted page to DT. None of this would be necessary if the full text search capabilities of Pinboard (part of Pinboard’s premium archival service) functioned well, but alas they don’t.
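To give a rough idea of what such a bulk conversion step might look like, here is a minimal Python sketch. It is not DT’s bookmarklet, not the Markdownifier service, and not any DT API: it only strips a saved page down to its title and visible text and wraps that in a small Markdown note. The names `TextExtractor` and `bookmark_to_markdown` are hypothetical, and the sketch assumes the page HTML has already been downloaded.

```python
from html.parser import HTMLParser


class TextExtractor(HTMLParser):
    """Collects the page title and visible text, skipping script/style content."""

    SKIP = {"script", "style"}
    BLOCK = {"p", "div", "br", "li", "tr", "blockquote",
             "h1", "h2", "h3", "h4", "h5", "h6"}

    def __init__(self):
        super().__init__()
        self.title = ""
        self.paragraphs = [[]]  # list of paragraphs, each a list of text chunks
        self._skip_depth = 0
        self._in_title = False

    def _break(self):
        # Start a new paragraph only if the current one has content.
        if self.paragraphs[-1]:
            self.paragraphs.append([])

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self._skip_depth += 1
        elif tag == "title":
            self._in_title = True
        elif tag in self.BLOCK:
            self._break()

    def handle_endtag(self, tag):
        if tag in self.SKIP and self._skip_depth:
            self._skip_depth -= 1
        elif tag == "title":
            self._in_title = False
        elif tag in self.BLOCK:
            self._break()

    def handle_data(self, data):
        if self._skip_depth:
            return
        text = " ".join(data.split())  # collapse runs of whitespace
        if not text:
            return
        if self._in_title:
            self.title += text
        else:
            self.paragraphs[-1].append(text)


def bookmark_to_markdown(url, html):
    """Return a small Markdown note: title heading, source link, plain text."""
    parser = TextExtractor()
    parser.feed(html)
    parser.close()
    title = parser.title or url
    body = "\n\n".join(" ".join(p) for p in parser.paragraphs if p)
    return f"# {title}\n\n<{url}>\n\n{body}\n"
```

Run over every bookmark in an export, something along these lines would leave one small text file per page that DT could index. Links, images and inline formatting are dropped, so a real converter would need to do considerably more.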

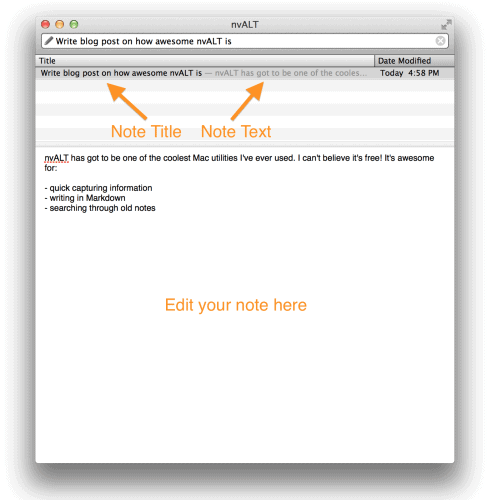

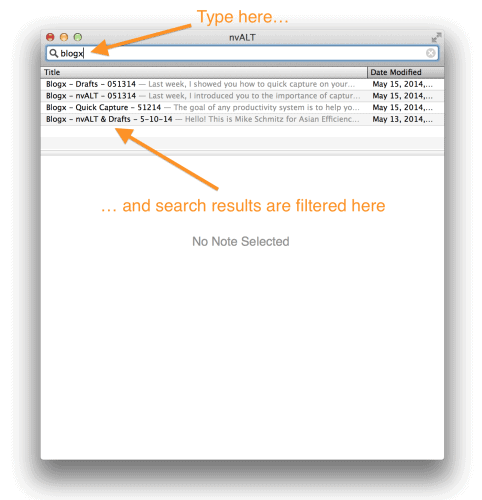

I’ve yet to find another desktop tool that can do the same thing. The main thing that I’m missing with this approach is the smart concordance-based search features of DT - primarily the ‘see also’ feature, which has consistently provided me with wonderfully unexpected links in my data over the years. On that basis I made the decision to use HistoryHound over DT as my tool for searching my Pinboard bookmarks, as HH also has DT-like sophisticated search logic that helps me locate relevant content. That same Pinboard data lives in HistoryHound (St. Clair Software) and the index size for all 20K-plus bookmarks is less than 100 MB (it only indexes the text content of each web page). HTML is the most efficient but that is a poor choice because the majority of the data is taken up by code markup.

This workflow works well for me as the project documentation I produce (based on my knowledge database) is created via Brett Terpstra’s Marked app (and sometimes via Ulysses on my iOS device), which effectively converts an HTML representation of my documentation to a CSS-styled PDF/DOCX on the fly.

But the major problem I’m having at the moment is that the only way to get my (20K-plus) Pinboard bookmark data into DT is to import the bookmarks and then face the ‘Hobson’s Choice’ of converting to HTML, webarchive, rich note or PDF, which has a huge overhead as each bookmark ends up being on average 300 KB to 3 MB (depending on the format choice).

I have approximately 5K Markdown notes that live in an nvALT folder, and each note takes up a minimal amount of data as all of my image/rich resources reside on a separate web server (or, increasingly, are direct links to OneNote or Flickr based images). I also have a huge PDF library hosted on OneDrive (ever since MS provided unlimited storage as part of their Office 365 package) and this is also indexed by DT and updates automatically in a seamless manner each time I relaunch DT.

The reason I use this methodology is that it keeps the majority of my database lean, and I have no problem making use of an index-only approach to my data - that way I can use my Markdown editors of choice across both desktop and mobile to get data into my DT database. My knowledge database is primarily based on plain text (specifically Markdown formatted files).
